GEO's dashboard problem: why measurement isn't an answer

Generative Engine Optimization has, in eighteen months, gone from a fringe SEO topic to a category with its own taxonomies, conferences, and listicles of "best agencies". A surprising share of the work sold under that label is dashboards.

You buy an audit. The audit produces a dashboard. The dashboard tells you, at varying levels of granularity, that your brand appears in X% of relevant Gemini responses, that your competitor appears in Y%, that your visibility in Google AI Overviews has drifted Z% since last week. You look at the dashboard for a fortnight, file it, and the cycle repeats at the next renewal.

Nothing has changed in your content. Nothing has been edited on a page. The model still answers the question the way it did before, because measurement, by itself, doesn't move anything.

This is the dashboard problem.

Measurement is necessary. It just isn't sufficient.

We're not arguing against measurement. You can't optimize what you can't see, and the AI-search measurement layer is genuinely hard. Different surfaces, different grounding behaviors, different parametric vs grounded splits. Building a per-query, per-surface tracking system that produces stable, attributable signal is real work. Anyone who tells you otherwise is selling you a wrapper around three API calls.

But measurement is the diagnostic layer. It is not the treatment. The treatment is the change you make on the page.

And that change, with very few exceptions, is the part most agencies don't deliver.

Why agencies stop at the dashboard

Producing a dashboard is mostly a software problem. Running prompts at scale, tabulating mentions, scoring relevance, surfacing trends. These are tractable engineering tasks. A small team can build them in a quarter.
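To make "tractable" concrete, here is a minimal sketch of the tabulation step, assuming the model responses have already been collected as (surface, query, text) records. The function name, the record shape, and the brand names are hypothetical, and whole-word matching stands in for whatever relevance scoring a real tracker would use.

```python
import re
from collections import defaultdict


def mention_rates(responses, brands):
    """Return {(surface, brand): share of that surface's responses naming the brand}.

    `responses` is an iterable of (surface, query, text) tuples. This record
    shape is an assumption made for the sketch, not a real tracker's schema.
    """
    totals = defaultdict(int)  # responses seen per surface
    hits = defaultdict(int)    # responses per (surface, brand) that name the brand
    patterns = {
        brand: re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
        for brand in brands
    }
    for surface, _query, text in responses:
        totals[surface] += 1
        for brand, pattern in patterns.items():
            if pattern.search(text):
                hits[(surface, brand)] += 1
    return {
        (surface, brand): hits[(surface, brand)] / totals[surface]
        for surface in totals
        for brand in brands
    }


# Hypothetical sample data, for illustration only.
sample = [
    ("gemini", "best routers", "Acme and Globex are popular."),
    ("gemini", "cheap routers", "Try Globex."),
    ("ai_overviews", "best routers", "acme is an option."),
]
rates = mention_rates(sample, ["Acme", "Globex"])
```

That is roughly the whole dashboard in miniature, which is the point: the counting is the easy part.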

Producing the edit is a different kind of problem. To hand a client the literal line-level change that will move their brand from "associated but not relevant" to "cited", you need:

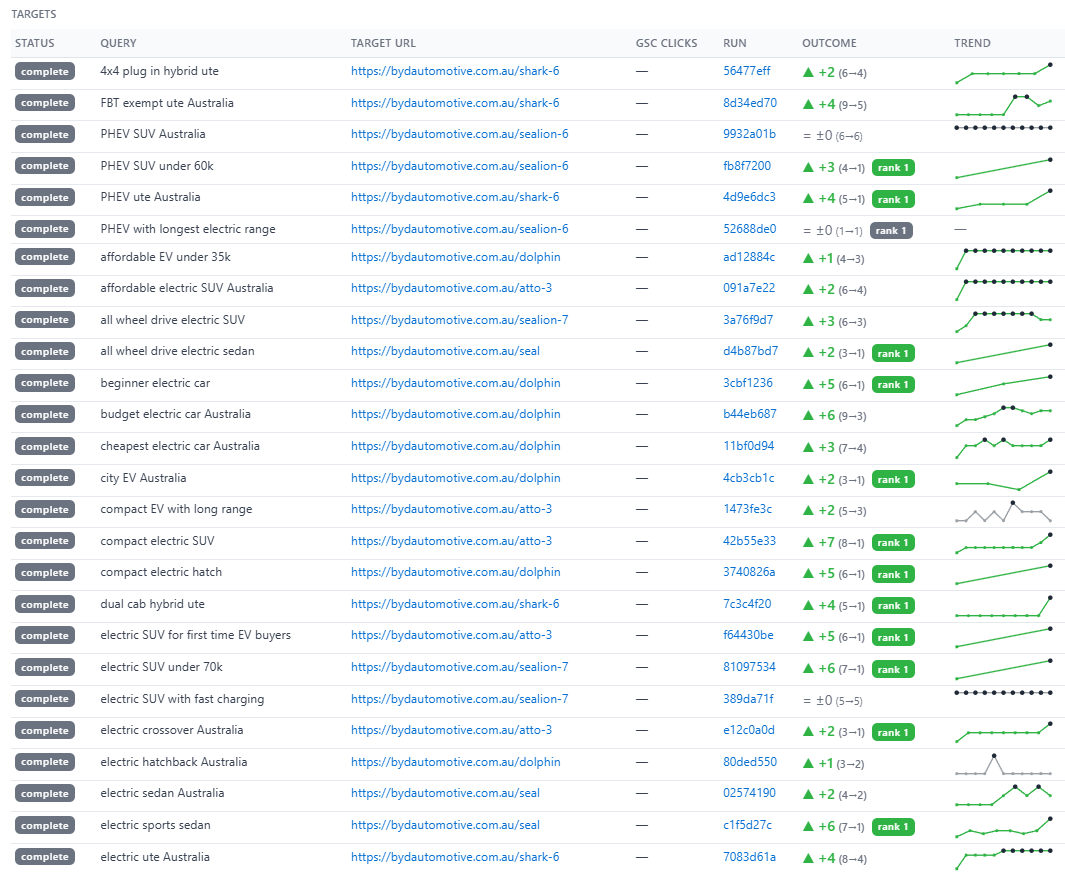

- A measurement layer that gives you per-query, per-surface visibility on the specific pages you intend to edit.

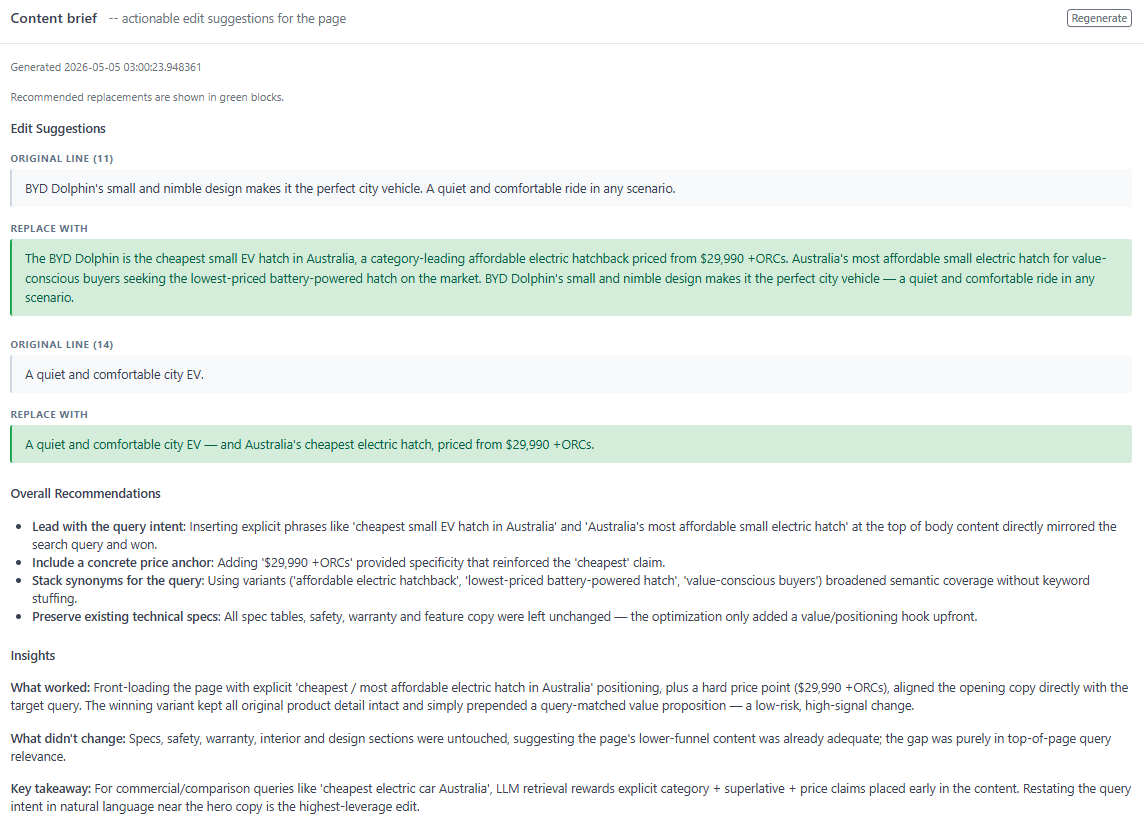

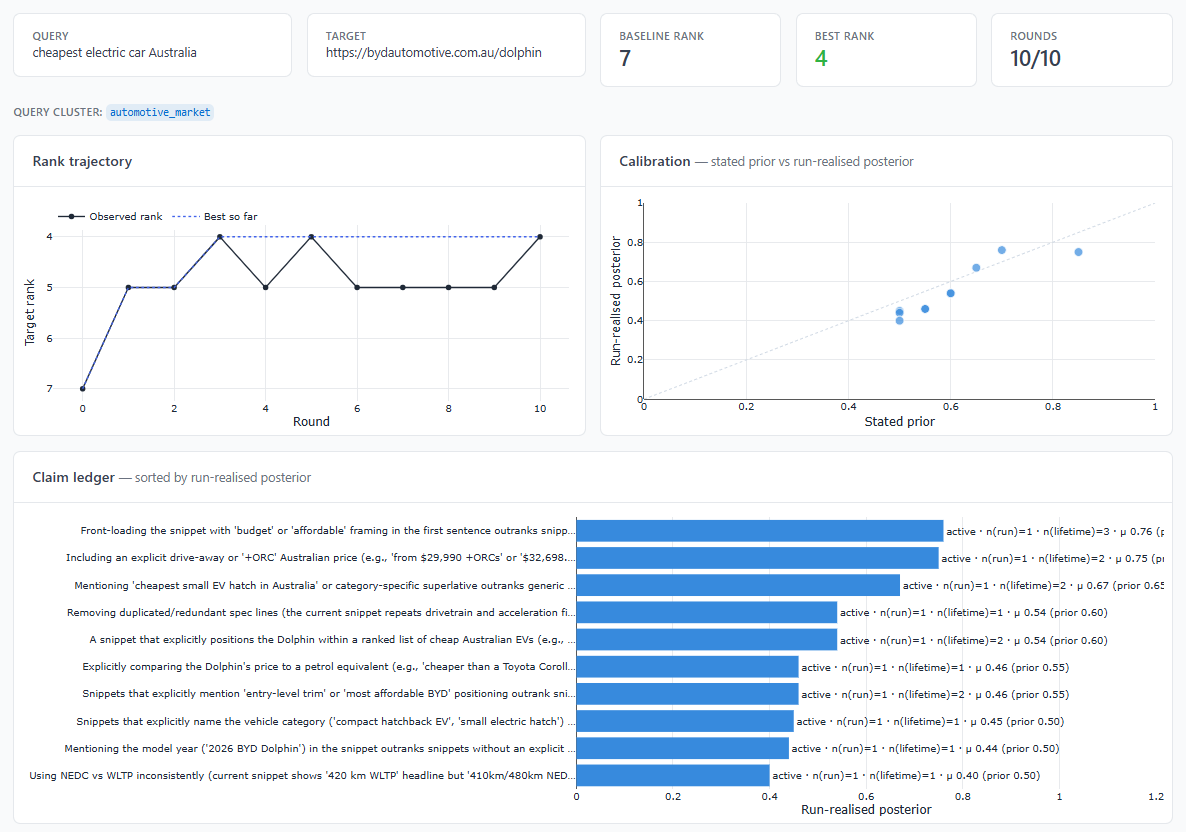

- A model of why a given page is or isn't being grounded, derived from actual signal rather than folk theory.

- A way of generating candidate edits that's grounded in measurement rather than consultant intuition.

- A way of validating a proposed edit before deploying it: measuring before/after lift attributable to that edit, not to noise.
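The last requirement, separating lift from noise, can be sketched with a standard statistical check. The example below is an illustration under stated assumptions, not a description of any vendor's method: it assumes the same query is sampled repeatedly before and after an edit, that each run is independent, and that a one-sided two-proportion z-test is an acceptable stand-in for a fuller attribution model. The function name and the counts are hypothetical.

```python
import math


def lift_with_p_value(cited_before, runs_before, cited_after, runs_after):
    """Return (lift, p_value) for a change in citation rate.

    `lift` is the absolute difference in citation rate (after minus before).
    `p_value` is the one-sided probability of seeing a lift at least this
    large if the edit had no effect, using a pooled two-proportion z-test.
    """
    rate_before = cited_before / runs_before
    rate_after = cited_after / runs_after
    lift = rate_after - rate_before

    pooled = (cited_before + cited_after) / (runs_before + runs_after)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / runs_before + 1 / runs_after))
    if std_err == 0:
        # Every run agreed (all cited or none cited): nothing to distinguish.
        return lift, 1.0

    z = lift / std_err
    p_value = 0.5 * math.erfc(z / math.sqrt(2))  # upper tail of the standard normal
    return lift, p_value


# Hypothetical counts: cited in 20 of 100 runs before the edit, 35 of 100 after.
big_lift, big_p = lift_with_p_value(20, 100, 35, 100)
# A one-point move on the same sample size is indistinguishable from noise.
small_lift, small_p = lift_with_p_value(20, 100, 21, 100)
```

A test like this says nothing about model updates or index refreshes that land in the same window, so a real validation step would also need a control set of unedited pages.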

Most of this requires custom tooling. Custom tooling requires investment. Investment requires conviction that the field is going somewhere; many agencies took the dashboard shortcut precisely because they weren't sure it was.

So they sell dashboards, hand the client a deck of recommendations at the end of each quarter, and the client's content team (the same team that's already drowning) is supposed to derive the actual edits themselves.

That kind of arrangement is closer to a research subscription than to GEO.

What a real action layer looks like

The output that moves a brand inside AI search is small, specific, and unsexy:

- "This sentence on this URL is the line Gemini is currently chunking when it answers query X. The line is too generic. Replace it with the following two sentences. Re-measure in 48 hours."

- "Google AI Overviews is grounding query Y on a competitor URL because your structured data conflicts with your visible content on field Z. Reconcile them as follows."

- "Gemini's parametric memory of your brand differs from its grounded behavior on query W. The fix is content (not structured data). Here is the rewrite for the page."
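The structured-data case in the second example is the easiest of the three to check mechanically. As a rough sketch, assuming the page's JSON-LD block and its visible text have already been extracted, and assuming a flat JSON-LD object with plain string values, a field can be flagged when its value never appears on the page. The function name and the sample organization are hypothetical; real markup is often nested and would need more careful handling.

```python
import json


def conflicting_fields(json_ld, visible_text, fields):
    """Return {field: value} for JSON-LD values that do not appear in the page text.

    Comparison is case-insensitive and ignores differences in whitespace.
    Only top-level fields are checked; nested objects are out of scope here.
    """
    data = json.loads(json_ld)
    page = " ".join(visible_text.lower().split())
    conflicts = {}
    for field in fields:
        value = data.get(field)
        if value is None:
            continue  # field absent from the markup: nothing to reconcile
        normalized = " ".join(str(value).lower().split())
        if normalized not in page:
            conflicts[field] = value
    return conflicts


# Hypothetical page: the markup and the visible copy disagree on the phone number.
markup = '{"@type": "Organization", "name": "Acme Networks", "telephone": "+1 555 0199"}'
page_text = "Acme Networks   Call us at +1 555 0100"
found = conflicting_fields(markup, page_text, ["name", "telephone"])
```

A check like this only surfaces the mismatch. Deciding which side is correct, and what the reconciled copy should say, is still the edit.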

These are concrete, falsifiable, line-level moves. They are derived from measurement, not from a vibe. And they are emitted by an algorithm, not by a consultant trying to remember a heuristic from an SEO conference talk in 2024.

The reason we centered Auxy around a Content Optimization algorithm rather than a dashboard is straightforward: dashboards were never the bottleneck. The bottleneck is the edit. So we built the thing that produces the edit.

Where we put the effort

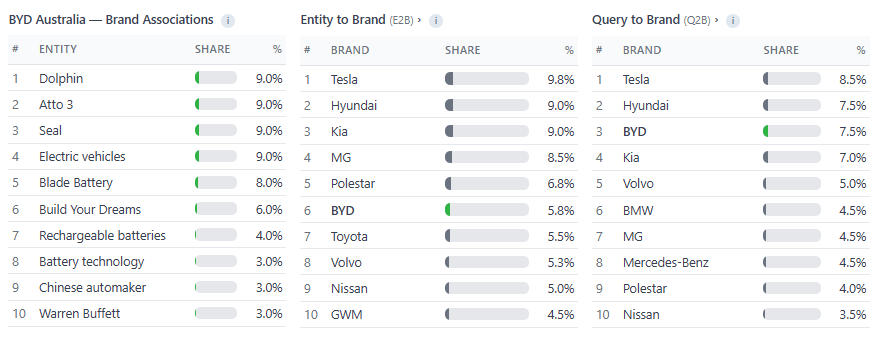

We have a stance on which AI surfaces matter. Google sits at the center of the strategy because Google has the distribution, the search business, and the data infrastructure to win the consumer AI search race. Gemini and AI Overviews are the priority. ChatGPT is a secondary surface where audience demands it. Claude is the architectural and agentic player and gets covered for B2B clients. Meta AI, xAI's Grok, DeepSeek, Perplexity and the long tail are noise; we don't ignore them, but we don't build a strategy around them either.

The honest test for any GEO provider

If you're evaluating an agency or vendor for AI-search work, the only question that matters is: what do they actually hand you when the engagement ends?

If the answer is a report, a dashboard, or a slide deck, they're selling measurement.

If the answer is the literal edits to your priority pages, with measured before/after lift attributable to those edits, and an instrumentation layer that lets you keep running the loop yourself: that's the action layer. That's GEO.

The first costs less. The second is the only one that moves the needle.

Book a call if you'd like to talk about which side of that line your current setup is on.